Guide Labs, a San Francisco-based startup, has released a new approach to one of the hardest problems in deep learning: understanding why a model produces the output it does. The company, founded by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail, has open-sourced Steerling-8B, an 8-billion-parameter LLM. The model is built on a new architecture designed to make its behavior easy to interpret: every token it produces can be traced back to its origins in the training data.

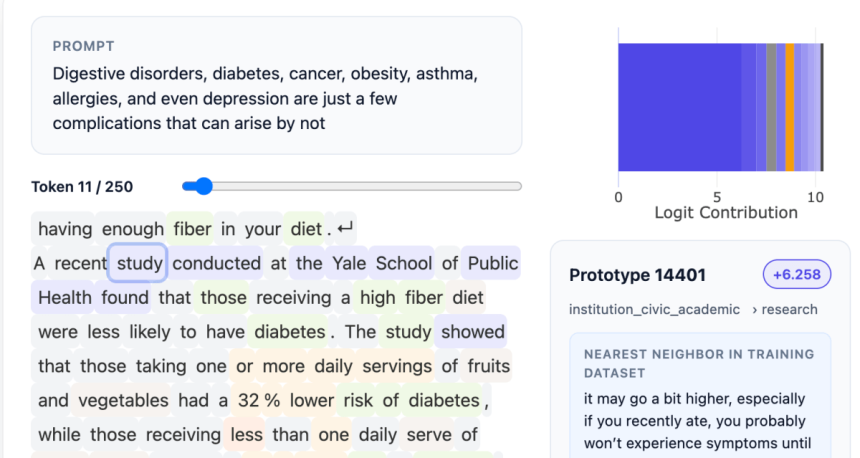

The approach matters because it can show why the model behaves the way it does. That covers straightforward cases, such as identifying the reference material behind a fact the model cites, as well as more abstract ones, such as how the model represents concepts like humor or gender. In both cases, Steerling-8B exposes the inner workings of the neural network rather than leaving them opaque.

Adebayo began this work during his time at MIT, where he co-authored a 2018 paper highlighting the shortcomings of existing methods for understanding deep learning models. That research eventually led to a new way of building LLMs: the model includes a concept layer that sorts data into categories so that its outputs can be traced. The approach requires annotating data up front, but by using other AI models to help with the annotation, the team was able to train Steerling-8B as its largest proof of concept yet.

By designing the model for interpretability from the ground up, Guide Labs aims to do away with the post hoc analysis that neuroscience-inspired interpretability methods rely on, in which researchers probe an already-trained model to work out what it is doing. Building interpretability into the architecture offers a more direct way to understand how the model reaches its decisions.

One concern is that constraining the architecture this way could limit the emergent behaviors that make LLMs useful. Adebayo says the model can still discover new concepts on its own, so gaining interpretability does not mean giving up the model's ability to learn beyond what it was explicitly taught.

An interpretable architecture has potential uses across many settings. Consumer-facing applications need content moderation, and regulated industries such as finance require transparency in how decisions are made; in both, being able to trace an output to its source is valuable. In scientific work such as protein folding, where researchers need to understand the reasoning behind a model's predictions, Guide Labs' technology could likewise provide useful insight.

Looking ahead, Guide Labs plans to build larger models and to offer API and agentic access to users. By making inherently interpretable models widely available, the company hopes to help users make informed decisions and to build trust in AI systems.

The move toward interpretable-by-design models is a notable step for the field of artificial intelligence. As Guide Labs continues to refine its approach, transparent and reliable AI systems look increasingly attainable for a wide range of applications.