“There are two things best left unseen as they come into being: sausages and econometric estimates. This unfortunate reality reflects a troubling lack of scientific rigor in our field. It seems that scarcely anyone takes data analysis at face value, or perhaps more accurately, no one trusts anyone else’s data analysis.”

This biting commentary comes from economist Ed Leamer, who issued a clarion call in his seminal 1983 article “Let’s Take the Con Out of Econometrics.” His assertion was that researchers are acutely aware of the unreliability of their peers’ estimates due to the arbitrary decisions made during the research process. Yet, for a significant portion of the subsequent decades, the educated public largely accepted peer-reviewed studies as credible.

However, this confidence began to wane following physician John Ioannidis’ influential 2005 article “Why Most Published Research Findings Are False.” Concerns multiplied during the “replication crisis” of the 2010s, amplified by social media, and cast a shadow over the integrity of published research. Psychology was the first field to feel the sting, particularly after the 2011 publication of “False Positive Psychology.” Economics and other social sciences were not immune to this scrutiny.

At the heart of the scientific method lies the principle of replicability. When a scientist conducts an experiment to measure a fundamental constant, such as the speed of light, the expectation is that others, provided with sufficient documentation, should be able to repeat the experiment and obtain the same result. If a lab’s findings cannot be duplicated, as happened with the dubious claims of cold fusion, one might conclude that they lack validity.

While we do not anticipate the same level of precision outside the hard sciences, the expectation remains that research should yield usable insights. For example, if one trial indicates a drug reduces heart attacks by 17% while another reports a reduction of 14%, that is an understandable variance. Yet, if initial trials support a drug’s efficacy while subsequent trials find it ineffective, it raises serious questions about the drug’s reliability.

In the realm of social science, researchers have, for years, produced findings akin to endorsements for a drug that ultimately proves ineffective or detrimental. For instance, a 2015 replication attempt led by Brian Nosek sought to reproduce 100 experiments published in prominent psychology journals and discovered that fewer than half yielded statistically significant results. Similarly, a Federal Reserve discussion paper from the same year reported disheartening results for published economics research.

If we cannot rely on peer-reviewed studies from leading journals, what should we consider credible? Since 2015, many have concluded “nothing,” or have resorted to a blend of common sense and preconceived ideological beliefs. Yet, reforms initiated in the wake of the replication crisis may finally be yielding a crop of replicable and reliable research.

The U.S. military, among other institutions, had been using social science research to inform its strategies. Doubts arising from the replication crisis prompted action. The Defense Advanced Research Projects Agency (DARPA), known for sponsoring groundbreaking technologies such as the Internet and autonomous vehicles, funded Brian Nosek and the Center for Open Science to carry out an extensive replication study across the social sciences. This initiative aimed to assess the reliability of existing research and identify common characteristics of more trustworthy studies.

The findings from this comprehensive effort were recently published in a special issue of the journal Nature. Hundreds of researchers, including me, collaborated to replicate various claims from studies appearing in top social science journals. Overall, we observed a positive trend from a dismal starting point. For instance, while it remains common for papers not to share the data or code that supposedly underpins their findings, the likelihood of such sharing has increased significantly since 2009, the start of our study period.

Figure 1: Data and code availability by year of publication

Source: Nature

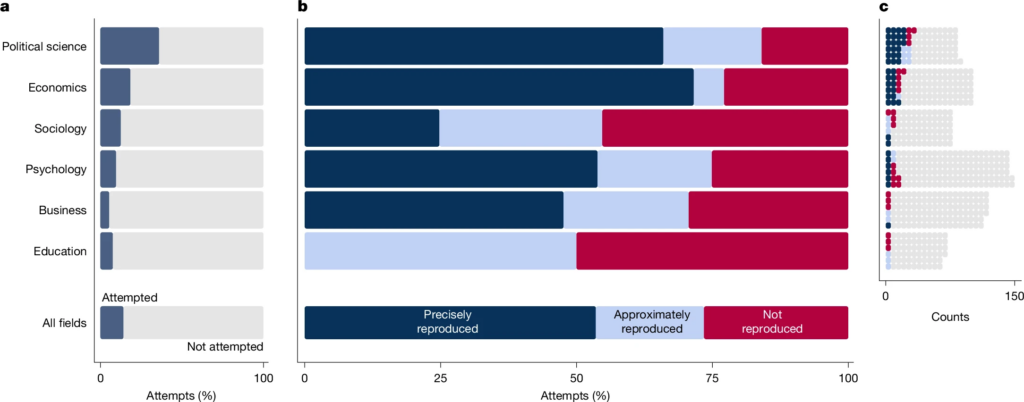

Economics and political science fare relatively well in this regard, with approximately half of articles sharing data or code, in stark contrast to fewer than 10% of articles in education. Economics also exhibited commendable “reproducibility,” with a significant majority of articles meeting this minimal standard. Reproducibility means that if other researchers apply the same dataset and methods as those described in a published article, they should arrive at the same results. In economics, this occurred 67% of the time, surpassing all other fields analyzed.

Figure 2: Reproducibility by Field

Source: Nature

Reproducibility is a low bar to clear. It indicates only that the original researchers documented their procedures well enough for others to repeat them, not that their findings were accurate. Conversely, inadequate documentation doesn’t automatically imply that the results are erroneous. So, how can we ascertain correctness?

Additional studies from the Nature issue examine how sensitive results are to methodological variations. When multiple plausible data analysis methods exist, did the original researchers fortuitously opt for the one yielding statistically significant results, or would most reasonable approaches lead to similar conclusions?
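The logic of such a robustness test can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the procedure used in the Nature papers: the data are synthetic, the "plausible methods" are stood in for by different outlier-trimming rules, the cutoff of 1.96 is the usual large-sample approximation for significance at the 5% level, and all function names are hypothetical.

```python
import random
import statistics
from math import sqrt


def welch_t(a, b):
    """Welch's t-statistic for the difference in means of two samples."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return (statistics.mean(a) - statistics.mean(b)) / sqrt(va / len(a) + vb / len(b))


def trim(values, share):
    """Drop the given share of observations from each tail of the sample."""
    k = int(len(values) * share)
    ordered = sorted(values)
    return ordered[k:len(ordered) - k] if k else ordered


def robustness_check(treated, control, trim_shares, critical=1.96):
    """Re-estimate the effect under each trimming rule (one 'specification' each)."""
    results = []
    for share in trim_shares:
        t = welch_t(trim(treated, share), trim(control, share))
        results.append({"trim": share, "t": t, "significant": abs(t) > critical})
    return results


# Synthetic data (an assumption for illustration): a modest true effect of 0.3.
rng = random.Random(0)
treated = [rng.gauss(0.3, 1.0) for _ in range(200)]
control = [rng.gauss(0.0, 1.0) for _ in range(200)]

results = robustness_check(treated, control, trim_shares=[0.0, 0.01, 0.05, 0.10])
share_significant = sum(r["significant"] for r in results) / len(results)

for r in results:
    print(f"trim={r['trim']:.2f}  t={r['t']:.2f}  significant={r['significant']}")
print(f"Share of specifications that are significant: {share_significant:.0%}")
```

If a finding is significant under only one of several reasonable specifications, that is a warning sign; if it holds under most of them, the original choice of method probably did not drive the result.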

In this context, most studies could be deemed “directionally correct.” Of those subjected to robustness testing, 74% yielded statistically significant results consistent with the original findings, while only 34% replicated effect sizes closely matching the original estimates.

When researchers attempted to replicate claims using new datasets (not just by applying different methods to existing data), only half produced statistically significant results aligned with the originals, and the magnitudes of these effects were generally less than half of those reported initially.

Taken together, these results suggest that published social science research often inflates the size of observed effects and sometimes asserts effects that do not exist. While this is far from an optimal situation, relying on research still does better than chance. For instance, robustness checks found significant effects contradicting the original paper only 2% of the time.

What implications does this hold for research consumers? It has always been prudent to regard individual studies with greater skepticism than entire bodies of literature. For economics, the Journal of Economic Perspectives effectively distills areas of research into accessible summaries.

As a novel guideline inspired by the Nature papers, consider this: “halve the estimated effect sizes.” If a study claims that a college degree boosts wages by 100%, it’s more likely that the actual increase is around 40-50%. John Ioannidis warned in 2005 that “most published research findings are false.” By 2026, we seem to have evolved to the point of acknowledging that “most published research findings are exaggerated.”
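The halving guideline amounts to simple arithmetic, sketched below in Python. The function name and the discount factors of 0.4 and 0.5 are assumptions read off the wage example above, not figures taken from the Nature papers.

```python
def discounted_range(reported_effect, low=0.4, high=0.5):
    """Return a (low, high) range for the plausible effect under the rule of thumb.

    The default factors are illustrative assumptions based on the
    "40-50%" example in the text, not estimates from any study.
    """
    return reported_effect * low, reported_effect * high


# A study reports that a college degree raises wages by 100%.
low, high = discounted_range(100.0)
print(f"Reported: 100% -> plausible: {low:.0f}% to {high:.0f}%")
```

The point of writing it down is only that the discount is a rough prior, to be replaced by field-specific evidence where that exists.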