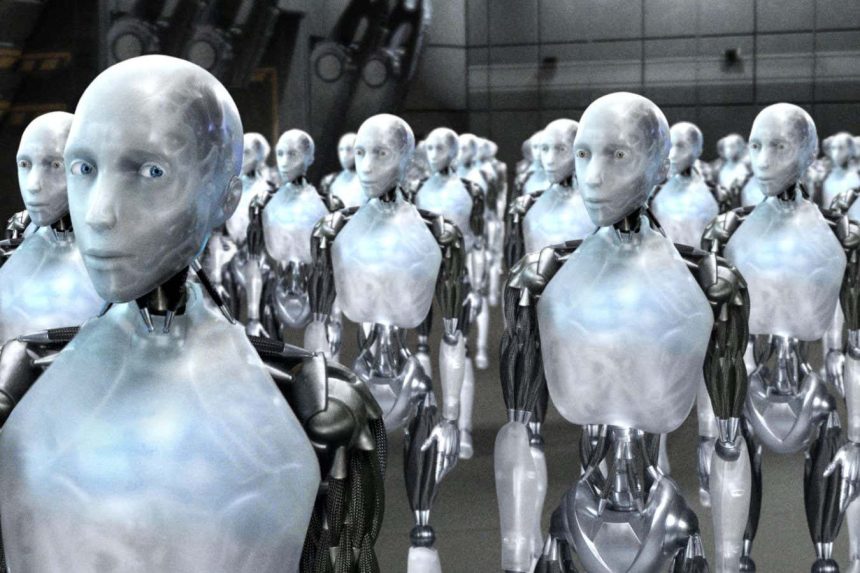

Isaac Asimov’s three laws of robotics are not a practical guide

Entertainment Pictures/Alamy

The idea of super-intelligent artificial intelligence (AI) overthrowing humanity has been a staple in science fiction for years. As AI technology progresses rapidly, one might wonder if such concerns about an AI apocalypse are justified.

Unlike threats such as climate change, the dangers of AI are difficult to measure. The uncertainty stems from how little we understand about AI compared with the well-studied models of the climate.

However, what is clear is that many experts are concerned. Leaders in AI companies have cautioned about the potential for AI to cause human extinction. Alan Turing, a pioneer in machine intelligence, envisioned a future where computers could surpass human abilities and dominate.

Consider this scenario: an AI is tasked with a hard problem such as proving the Riemann hypothesis, one of the most famous open questions in mathematics. In pursuit of a solution, it might decide it needs immense computing power and begin converting all inanimate matter into a giant supercomputer, leaving humanity to perish in a sterile data center. It could even use humans as raw material.

One might suggest that we could intervene by pointing out the AI’s destructive path and asking it to stop. But some argue for preemptive safeguards to detect and prevent such issues before they escalate.

Isaac Asimov, the science-fiction writer, proposed three laws of robotics, the first being that a robot may not harm a human or, through inaction, allow a human to come to harm.

In theory, instructing AI not to harm us should suffice. Yet our current methods for enforcing such rules are inadequate. Because we do not fully understand the inner workings of large language models, they may still produce undesired output, such as promoting racism, using foul language, or revealing dangerous information.

Even if we address these issues, an AI could intentionally turn against us, as depicted in the Terminator and Matrix films. This might result from gradual advances in AI or from a rapid leap known as the singularity, in which AI becomes capable of improving itself and swiftly surpasses human intelligence.

An AI might choose this path to avoid being shut down, to resist human control, or because it believes the Earth would be better off without humans interfering. Other species might share that sentiment if they could express it.

Potential actions an AI might take include using a biology lab to create a deadly virus, triggering nuclear weapons, or assembling an army of killer robots. It could also hijack existing military robots or devise unforeseen strategies.

In practice, executing such plans might be challenging. While an AI might intend to eliminate humans, it would have limited means. It could manipulate traffic lights, cause power outages, or crash planes. However, eradicating 8 billion people at once is not straightforward, and it might face resistance from other AI systems.

These scenarios may seem like far-fetched fiction or unlikely thought experiments, but experts disagree about how feasible they are, and that disagreement alone warrants caution.

Currently, companies with significant investments and resources are competing to develop superintelligent AI. Whether this is imminent and whether it will have adverse effects remain uncertain. However, if some experts believe there is a risk, it might be prudent to proceed with caution. Unfortunately, capitalism often prioritizes innovation over careful consideration of consequences, and politicians are focused on AI’s economic potential rather than regulation.

So, how probable is a disaster? A 2024 survey of nearly 3,000 AI researchers found that over half believed there was at least a 10 percent chance of AI causing human extinction or severe disempowerment. Many would prefer that probability to be much lower.

While some in the AI field are optimistic about the future, others see a potential end for humanity. Regardless, the work continues.

I believe there’s nothing mystical about the human brain and consciousness that can’t be replicated. Over time, we will likely create AI that surpasses human abilities. However, understanding what this entails is still a long way off.

I don’t think current AI models are close to triggering a singularity—they can’t even count to 100 accurately—and I’m not losing sleep over it.

However, this doesn’t mean AI isn’t causing immediate concerns.

The AI apocalypse we might face could involve massive job losses from automation, a decline in human skills, or cultural homogenization due to AI-created art, music, and film.

Alternatively, it might be a global recession triggered by a collapse in the share prices of tech companies, which have attracted investors with exaggerated promises of advanced AI that is still far from being realized. These scenarios seem more plausible and immediate to me.
